AI-Powered Development. Locally Hosted. Fully Yours.

A self-hosted development orchestration platform that turns feature requests into working code through a multi-phase AI pipeline, with safety reviews, auto-fix loops, and full audit trails.

Your code never leaves your network

Review before every execution

Evidence ledgers for every run

Open-source, runs on your hardware

Built with

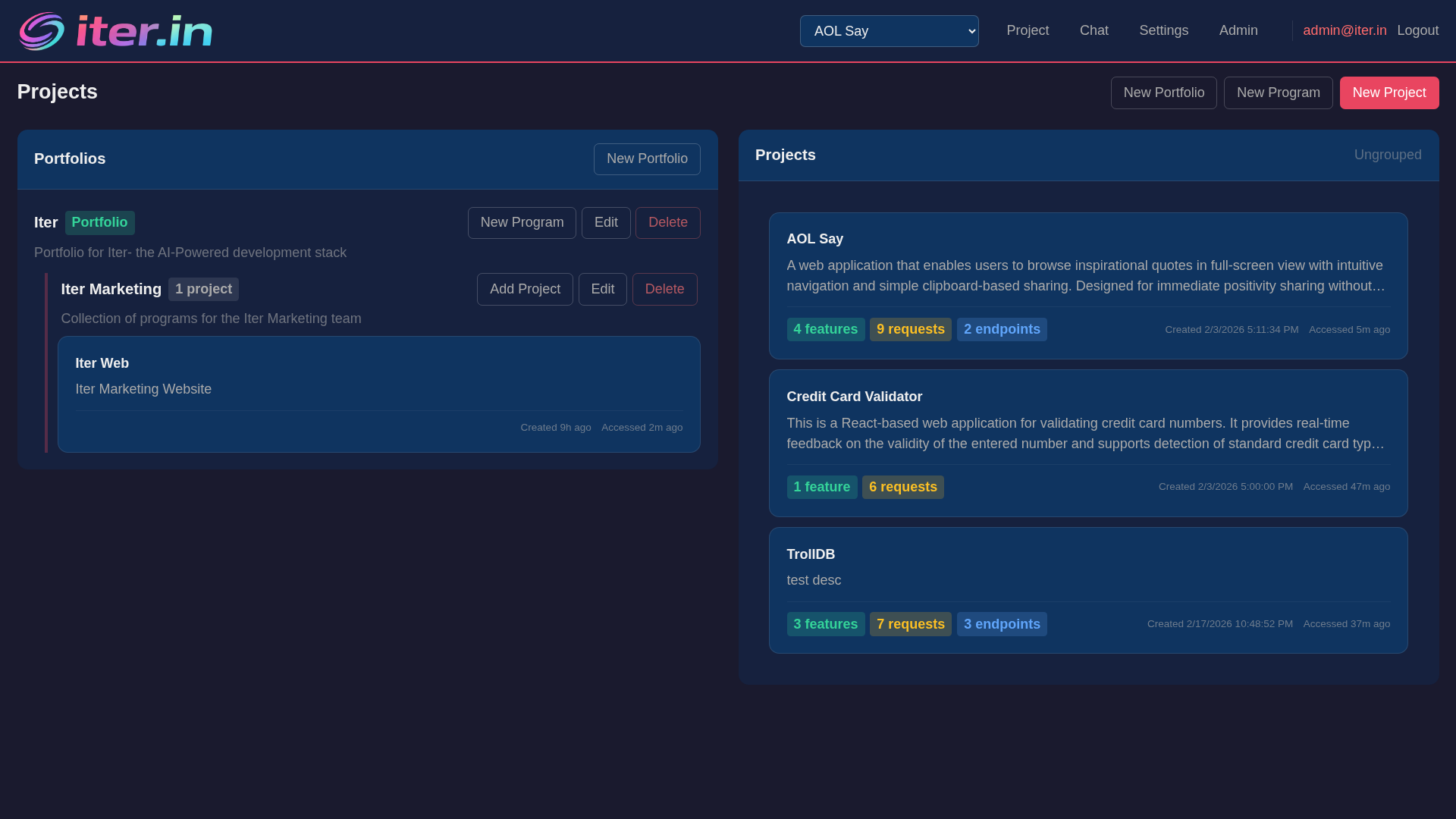

Development orchestration that you control

No cloud dependency. No vendor lock-in. Run on your hardware with your models.

Self-Hosted AI

Run entirely on your infrastructure with local Ollama models. Your code never leaves your network, and model requests are routed across multiple hosts in your GPU fleet.

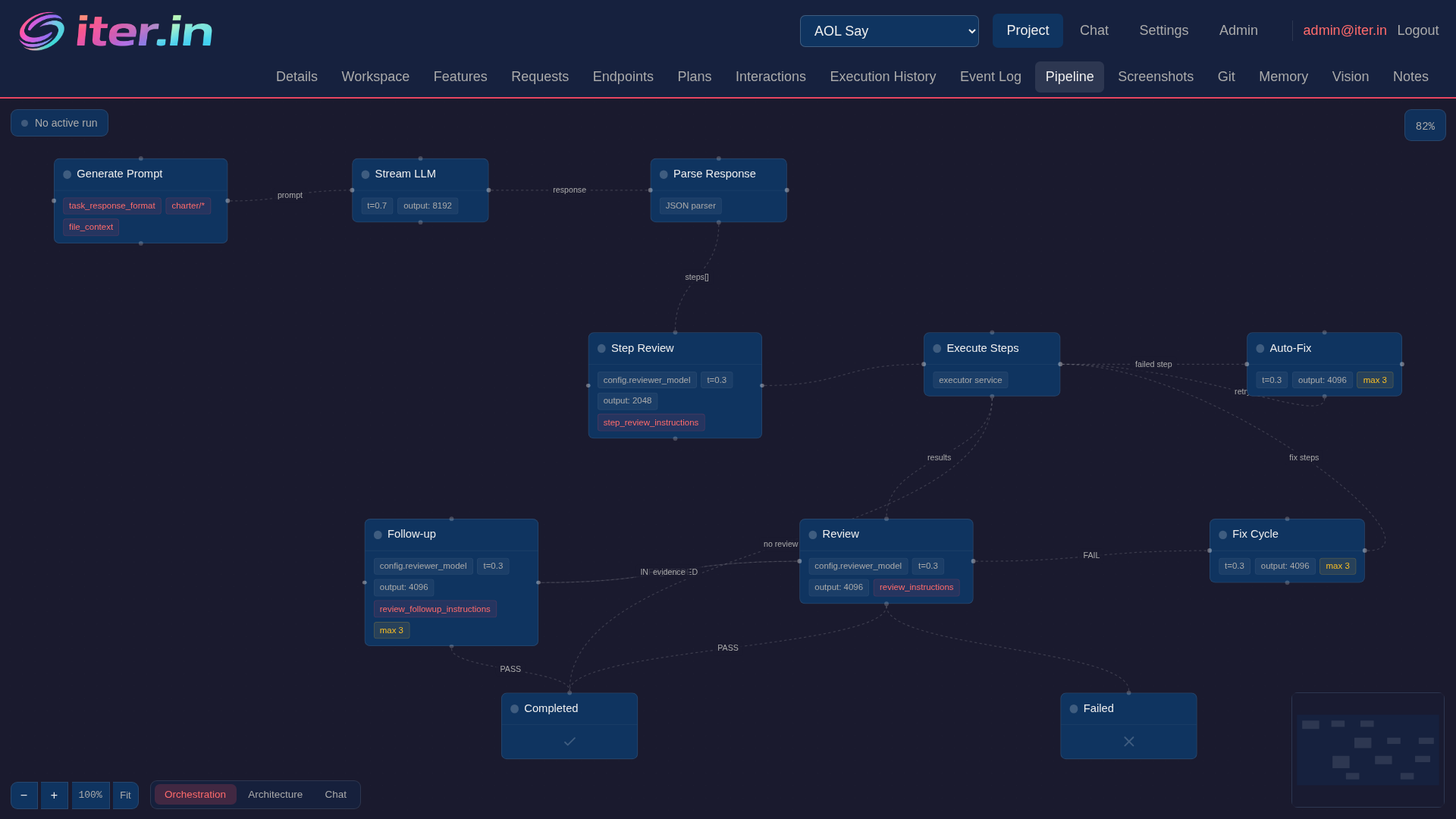

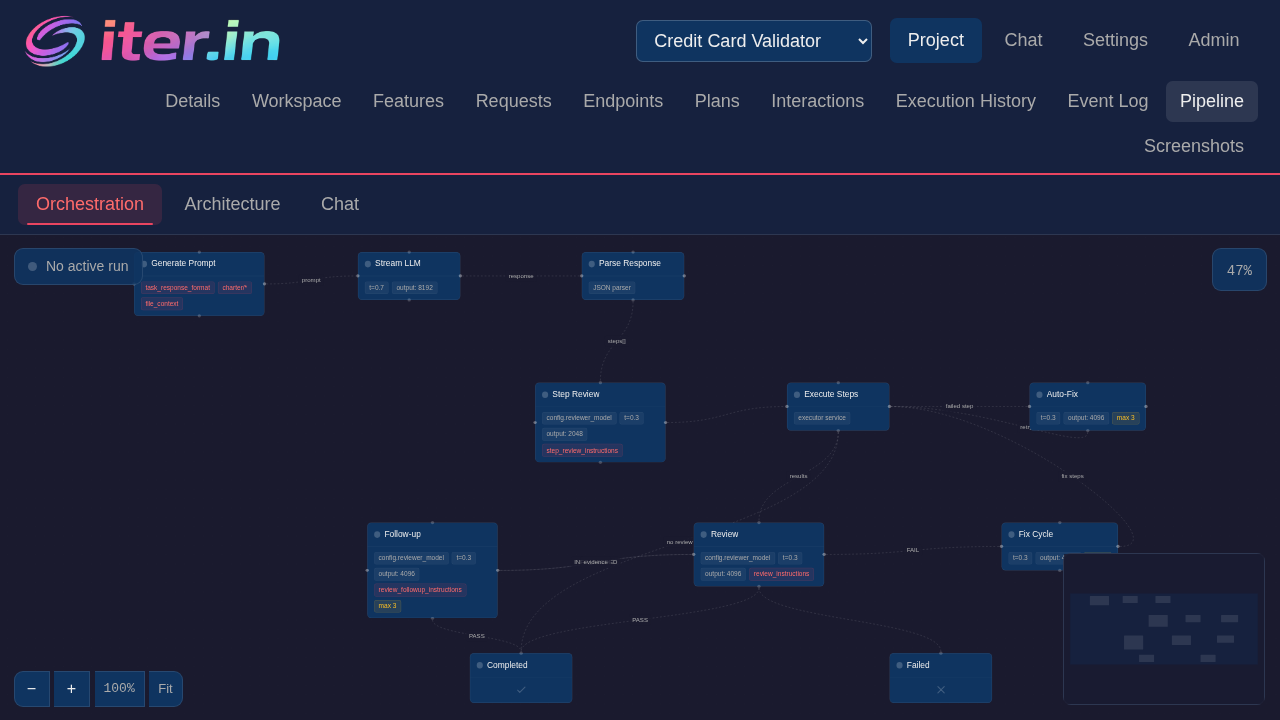

Multi-Phase Pipeline

Prompt → Stream → Review → Execute → Verify. Every change passes through these structured phases, with a safety gate between consecutive phases.

Quality Gates

An 8-point pre-execution safety review, post-execution verification against acceptance criteria, and automatic fix loops when a step fails.

Multi-Phase Orchestration Pipeline

Every feature request flows through a structured pipeline: context-aware prompting, LLM streaming with structured output, pre-execution safety review, isolated execution, and post-execution verification.

- Context-rich prompts with project state and file contents

- Grammar-constrained LLM output for reliable parsing

- Isolated command execution in sandboxed containers
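The gating behaviour described above can be sketched in a few lines of Python. This is a minimal illustration, not Iter's implementation: the phase names mirror the Prompt → Stream → Review → Execute → Verify sequence, while `RunState`, `run_pipeline`, and the "each phase returns whether its gate passed" convention are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

# Phase order taken from the pipeline description; the names are illustrative.
PHASES = ["prompt", "stream", "review", "execute", "verify"]


@dataclass
class RunState:
    """Hypothetical state handed from phase to phase."""
    request: str
    artifacts: dict = field(default_factory=dict)
    log: list = field(default_factory=list)


# A phase does its work on the shared state and returns True if its gate passes.
Phase = Callable[[RunState], bool]


def run_pipeline(state: RunState, phases: dict[str, Phase]) -> bool:
    """Run phases in order and stop at the first gate that fails."""
    for name in PHASES:
        passed = phases[name](state)
        state.log.append((name, "PASS" if passed else "FAIL"))
        if not passed:
            return False  # later phases never run after a failed gate
    return True
```

Stopping at the first failed gate is what keeps an unsafe plan from reaching the execute phase; the log doubles as a simple record of which gates a run passed.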

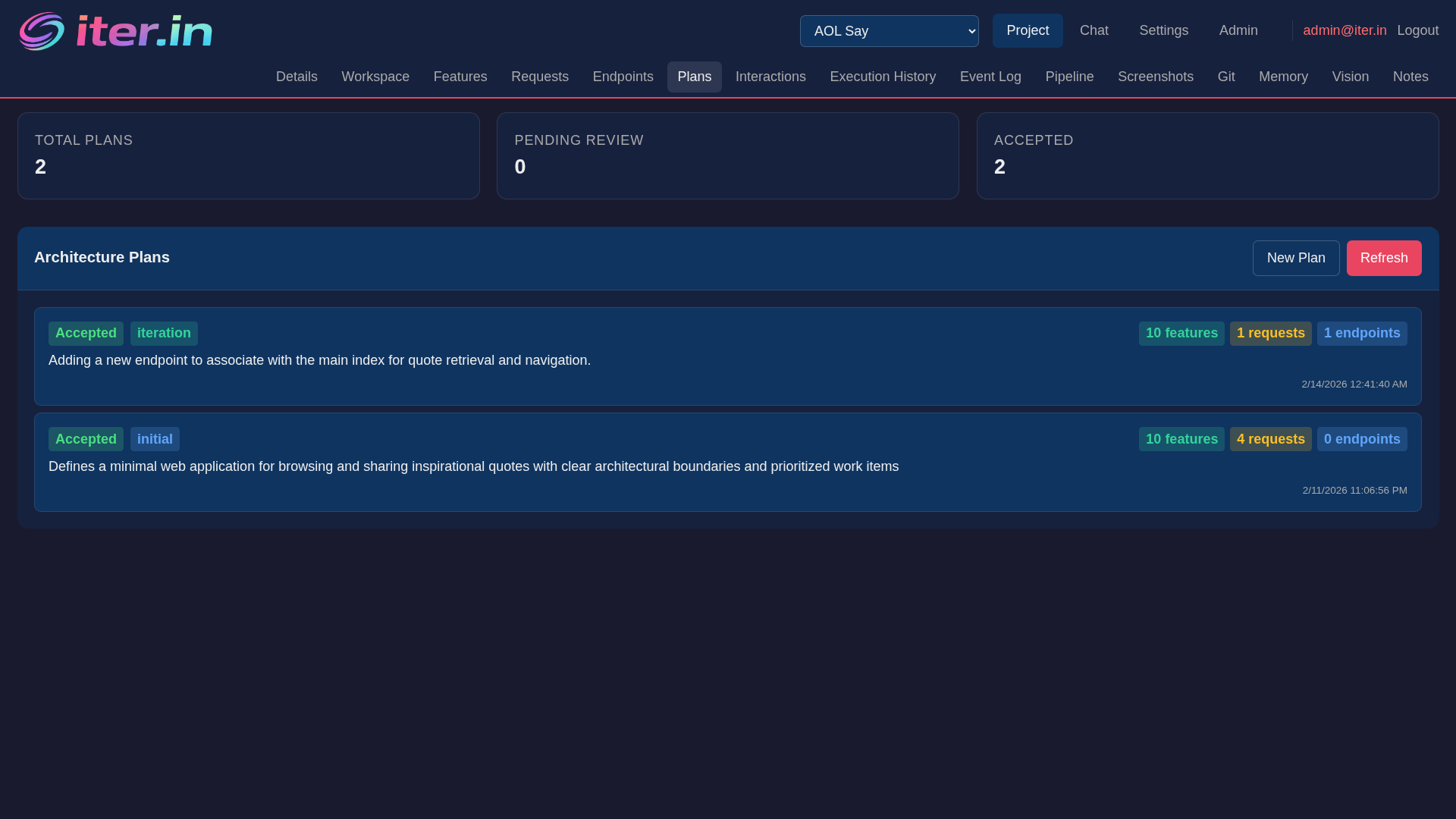

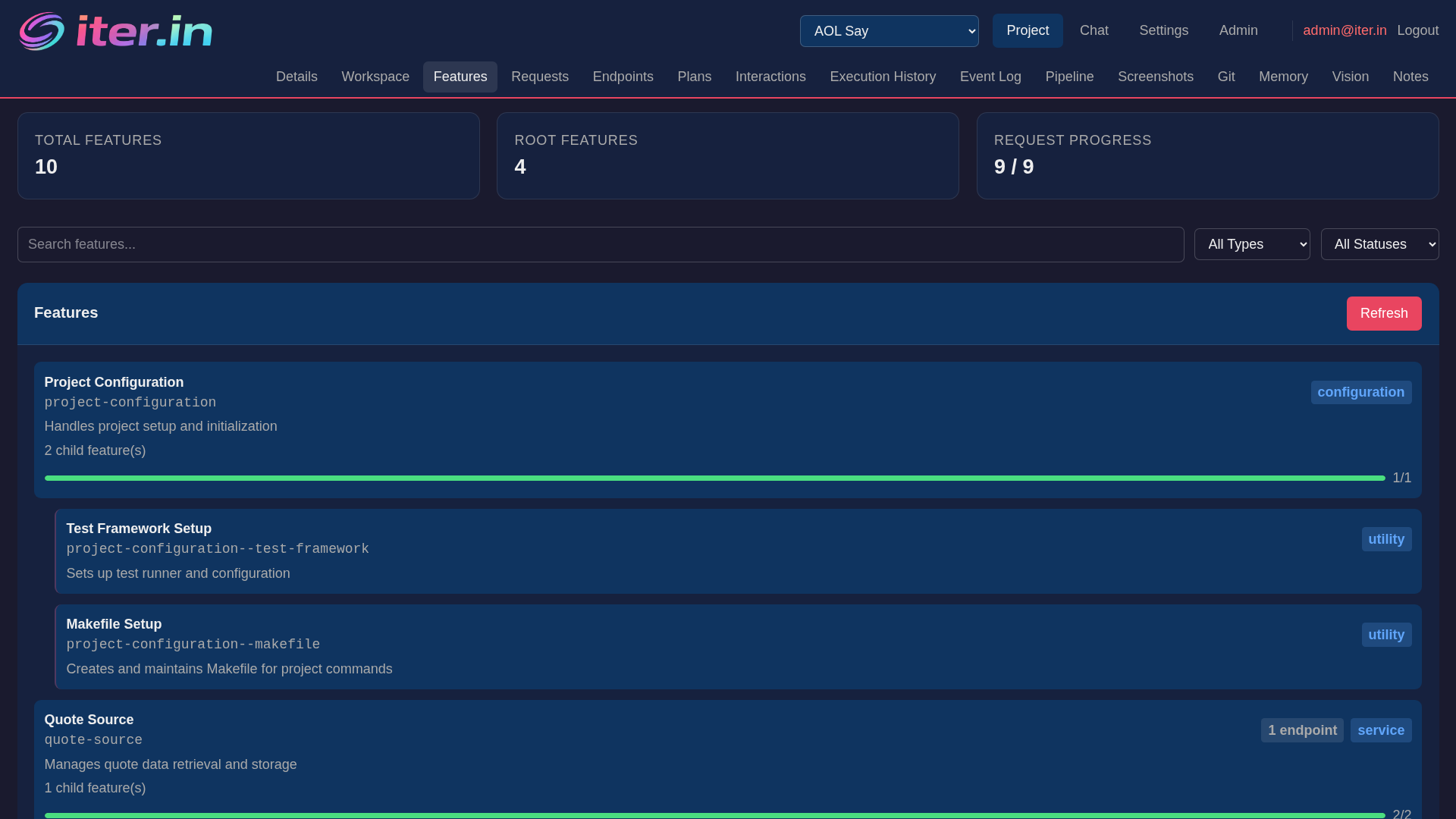

Architecture-Aware Planning

Iter understands your project structure. Feature hierarchies, endpoint maps, and dependency tracking give the AI context to make architectural decisions that fit your codebase.

- Feature hierarchy with parent-child relationships

- Endpoint registry with method, path, and feature mapping

- Affected file tracking for targeted context loading
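One way to model the feature hierarchy and endpoint registry is sketched below. The classes and the `context_for` helper are hypothetical, not Iter's schema. They show how parent-child links and per-feature file tracking let a planner load only the files and endpoints belonging to one feature subtree.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Feature:
    id: str
    name: str
    parent_id: Optional[str] = None          # None for a top-level feature
    affected_files: set[str] = field(default_factory=set)


@dataclass
class Endpoint:
    method: str       # e.g. "GET", "POST"
    path: str         # e.g. "/login"
    feature_id: str   # feature this endpoint belongs to


class ProjectMap:
    """Hypothetical in-memory registry of features and endpoints."""

    def __init__(self) -> None:
        self.features: dict[str, Feature] = {}
        self.endpoints: list[Endpoint] = []

    def add_feature(self, feature: Feature) -> None:
        self.features[feature.id] = feature

    def add_endpoint(self, endpoint: Endpoint) -> None:
        self.endpoints.append(endpoint)

    def subtree(self, feature_id: str) -> list[Feature]:
        """Return the feature and all of its descendants, depth-first."""
        result = [self.features[feature_id]]
        for f in self.features.values():
            if f.parent_id == feature_id:
                result.extend(self.subtree(f.id))
        return result

    def context_for(self, feature_id: str) -> tuple[set[str], list[Endpoint]]:
        """Files and endpoints to load into a prompt for one feature subtree."""
        features = self.subtree(feature_id)
        ids = {f.id for f in features}
        files = set().union(*(f.affected_files for f in features))
        eps = [e for e in self.endpoints if e.feature_id in ids]
        return files, eps
```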

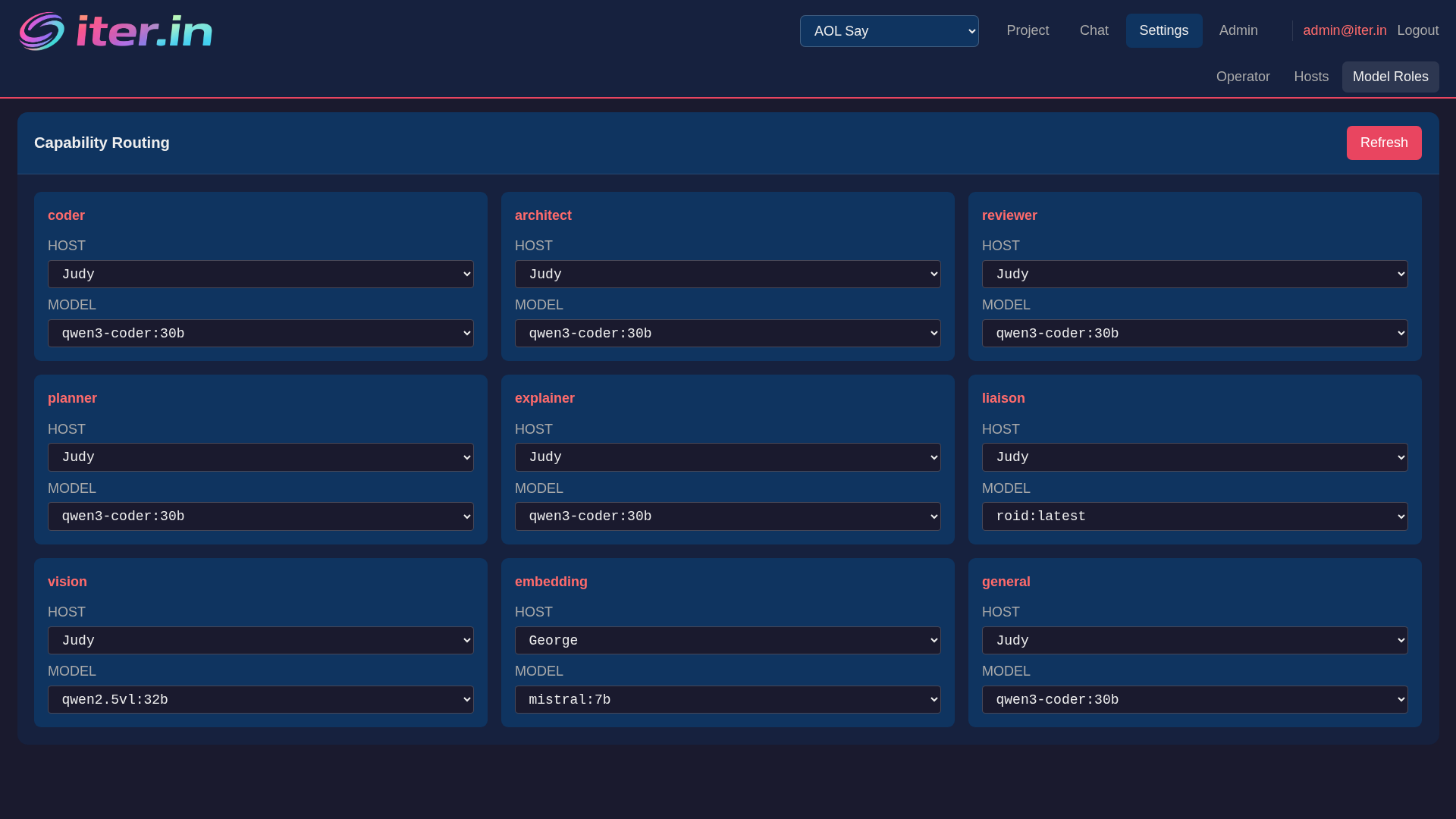

Multi-Model Routing

Route different tasks to different models based on capability. Use your fastest model for code generation, your most capable for architecture reviews, and your cheapest for validation.

- Capability-based routing: coder, reviewer, architect, planner

- Multi-host support across your GPU fleet

- Automatic fallback chains when models are unavailable
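Capability routing with fallback chains reduces to an ordered lookup. In the sketch below, the routing table, the host names (`gpu-a`, `gpu-b`), and the model names are placeholders, not Iter defaults. The `is_available` check is also an assumption; in practice it might query each host's Ollama `/api/tags` endpoint to see which models are installed.

```python
from typing import Callable

# Hypothetical routing table: capability -> fallback chain of (host, model), best first.
ROUTES: dict[str, list[tuple[str, str]]] = {
    "coder": [("gpu-a", "fast-coder"), ("gpu-b", "fast-coder")],
    "reviewer": [("gpu-b", "large-reviewer"), ("gpu-a", "fast-coder")],
    "architect": [("gpu-b", "large-reviewer")],
    "planner": [("gpu-a", "small-planner"), ("gpu-b", "large-reviewer")],
}


def route(capability: str, is_available: Callable[[str, str], bool]) -> tuple[str, str]:
    """Return the first available (host, model) in the capability's fallback chain."""
    for host, model in ROUTES.get(capability, []):
        if is_available(host, model):
            return host, model
    raise RuntimeError(f"no available model for capability {capability!r}")
```

Keeping the chain ordered by preference means the same table encodes both the primary choice and what to fall back to when a host is down.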

Auto-Fix Loops

When a step fails, Iter automatically generates a fix prompt with full pipeline context: previous attempts, error output, and file state. Fix children are injected into the step tree, and the original step is then re-verified.

- Up to 3 fix attempts per step with full error context

- Fix prompts include pipeline state and run history

- Automatic re-verification after successful fixes
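The fix loop can be sketched as follows, assuming a step is a callable that returns `None` on success or error text on failure. `Step`, `build_fix_prompt`, and `make_fix` are hypothetical names. In a real system `make_fix` would send the prompt to a model and turn its answer into a new step. The limit of 3 attempts comes from the list above.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

MAX_FIX_ATTEMPTS = 3  # "up to 3 fix attempts per step"


@dataclass
class Step:
    name: str
    action: Callable[[], Optional[str]]  # returns None on success, error text on failure
    children: list["Step"] = field(default_factory=list)


def build_fix_prompt(step: Step, error: str, history: list[str]) -> str:
    """Hypothetical fix prompt: the failing step, its error, and earlier attempts."""
    previous = "\n".join(history) or "(none)"
    return f"Step {step.name!r} failed.\nError:\n{error}\nPrevious attempts:\n{previous}"


def run_with_fixes(step: Step, make_fix: Callable[[str], Step]) -> bool:
    """Run a step; on failure inject fix children and re-verify the original step."""
    error = step.action()
    history: list[str] = []
    attempt = 0
    while error is not None and attempt < MAX_FIX_ATTEMPTS:
        attempt += 1
        fix = make_fix(build_fix_prompt(step, error, history))
        step.children.append(fix)  # the fix becomes a child in the step tree
        fix_error = fix.action()
        history.append(f"attempt {attempt}: {fix.name} -> {fix_error or 'ok'}")
        error = step.action()  # re-verify the original step
    return error is None
```

Re-running the original step, rather than trusting the fix step's own result, is what makes the loop verify the outcome instead of the attempt.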

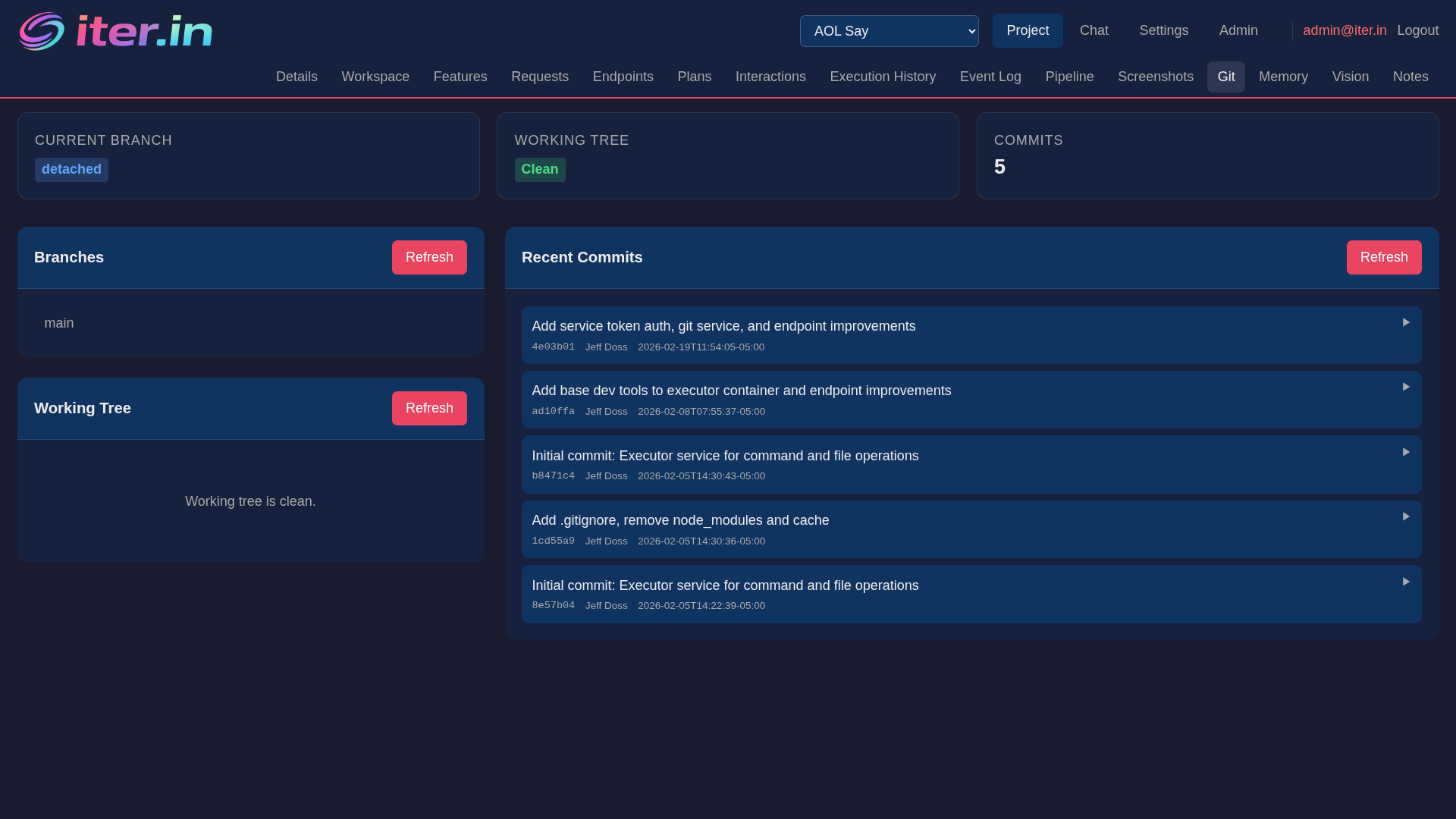

Automatic Git Feature Branches

Every feature request gets its own git branch. Iter commits at each pipeline phase and, once the review passes, safely merges to main with a rebase followed by a fast-forward merge, keeping history clean and linear.

- Branch-per-request with auto-generated names from descriptions

- Phase-based commits with request ID and run metadata

- Safe rebase-and-merge with conflict detection and preservation
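Iter's exact branch-naming scheme isn't specified here, so the following is a hypothetical sketch. `branch_name` slugifies the first few words of the description behind a `feature/` prefix and the request ID. `merge_commands` lists the standard git commands for a rebase followed by a fast-forward-only merge.

```python
import re


def branch_name(request_id: str, description: str, max_words: int = 6) -> str:
    """Derive a branch name from a request description (hypothetical scheme)."""
    words = re.findall(r"[a-z0-9]+", description.lower())[:max_words]
    return f"feature/{request_id}-{'-'.join(words)}"


def merge_commands(branch: str, main: str = "main") -> list[list[str]]:
    """git commands for a rebase + fast-forward merge of `branch` into `main`."""
    return [
        ["git", "checkout", branch],
        ["git", "rebase", main],                 # replay feature commits on top of main
        ["git", "checkout", main],
        ["git", "merge", "--ff-only", branch],   # refuses anything but a fast-forward
    ]
```

Running each command with `subprocess.run(cmd, check=True)` stops at the first failure. If `git rebase` hits a conflict, `git rebase --abort` returns the branch to its pre-rebase state. That is one way to detect conflicts while keeping the feature work intact for manual resolution.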

RAG & Semantic Search

ChromaDB-powered vector search across your entire project, with file ranking by semantic relevance, memory-based error recovery, and automatic file-context enrichment for feature requests.

- Semantic search across project files, memories, and error patterns

- Hybrid error matching: regex + vector for automatic fix recall

- Vector pre-filtering narrows 500+ files to the 30 most relevant
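Vector pre-filtering is a top-k nearest-neighbour lookup. ChromaDB can run it server-side, for example via `collection.query(..., n_results=30)`. The plain-Python sketch below instead ranks files by cosine similarity to make the arithmetic visible. It assumes embeddings have already been computed for the query and for each file.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def prefilter(query_vec: list[float], file_vecs: dict[str, list[float]], k: int = 30) -> list[str]:
    """Return the k file paths whose embeddings are most similar to the query."""
    ranked = sorted(file_vecs, key=lambda p: cosine(query_vec, file_vecs[p]), reverse=True)
    return ranked[:k]
```

Only the surviving `k` files then need to be read and placed in the prompt, which is what keeps context size bounded on large projects.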

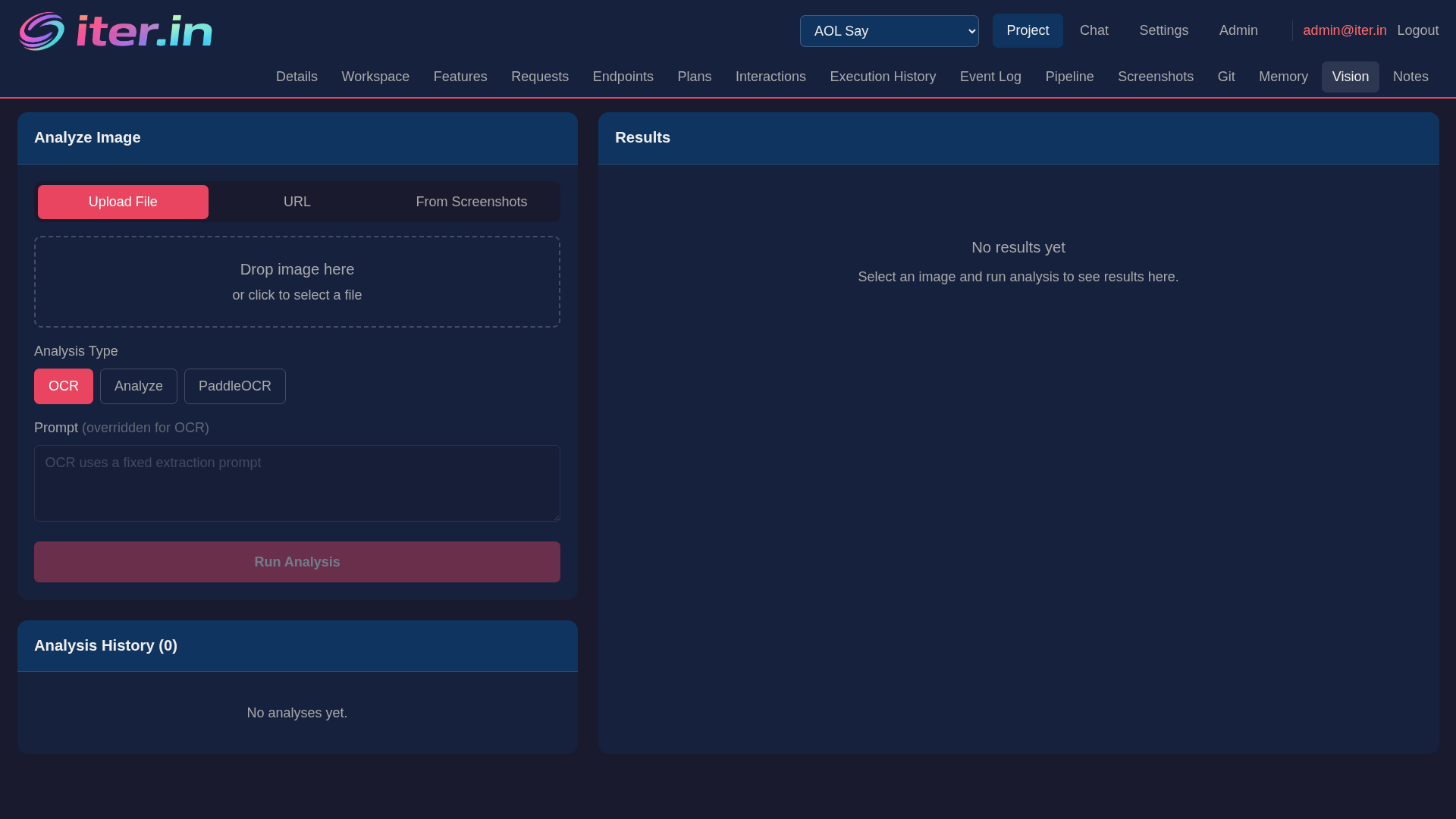

Vision & Voice

A vision server with PaddleOCR and vision-language models for image analysis, and a voice server with streaming STT, multi-engine TTS, and NLP analysis, so you can talk to your AI and hear it respond.

- PaddleOCR text extraction + qwen2.5vl vision-language analysis

- Streaming Whisper STT with NLP intent, sentiment, and entity recognition

- Multi-engine TTS (Piper, Coqui, Kokoro, Qwen3) with voice cloning

- Dedicated Voice Chat page with waveform visualization and auto-TTS

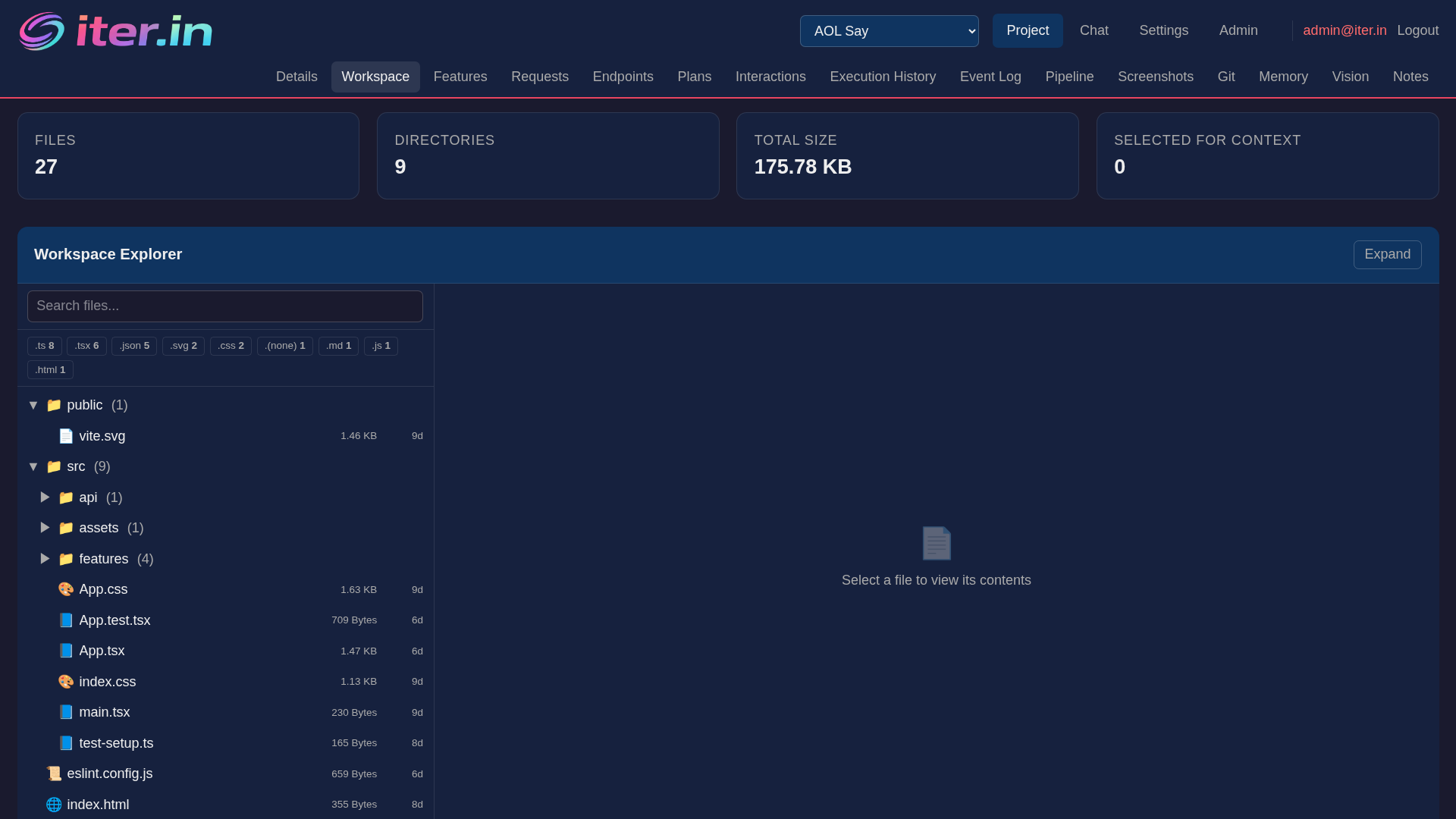

Workspace & File Context

Browse your project files, select context for prompts, and track which files each feature affects. Smart file filtering keeps prompts focused on the code that matters.

- File explorer with search and context selection

- Affected file tracking per feature request

- Automatic file enrichment from RAG and vector ranking

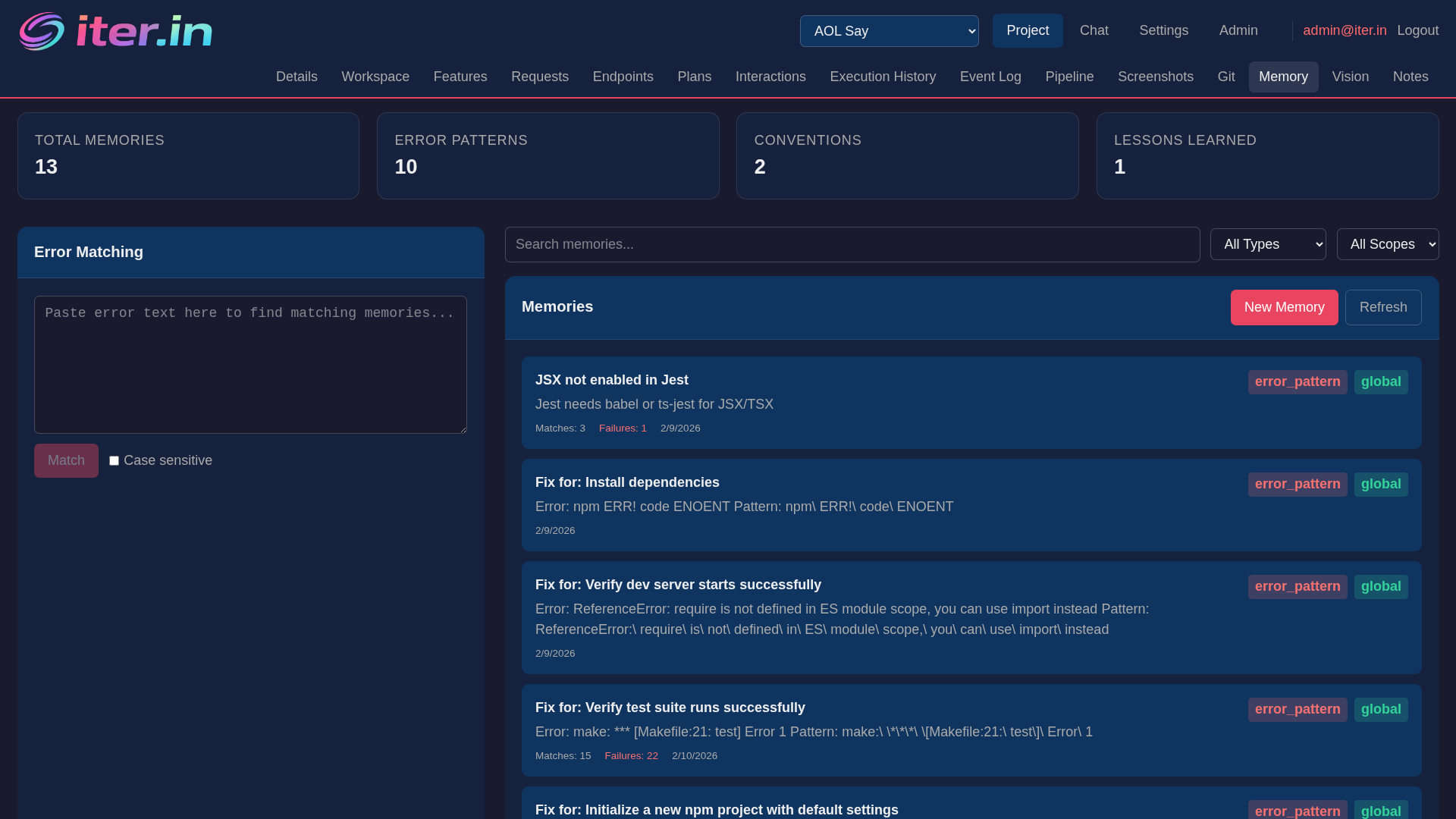

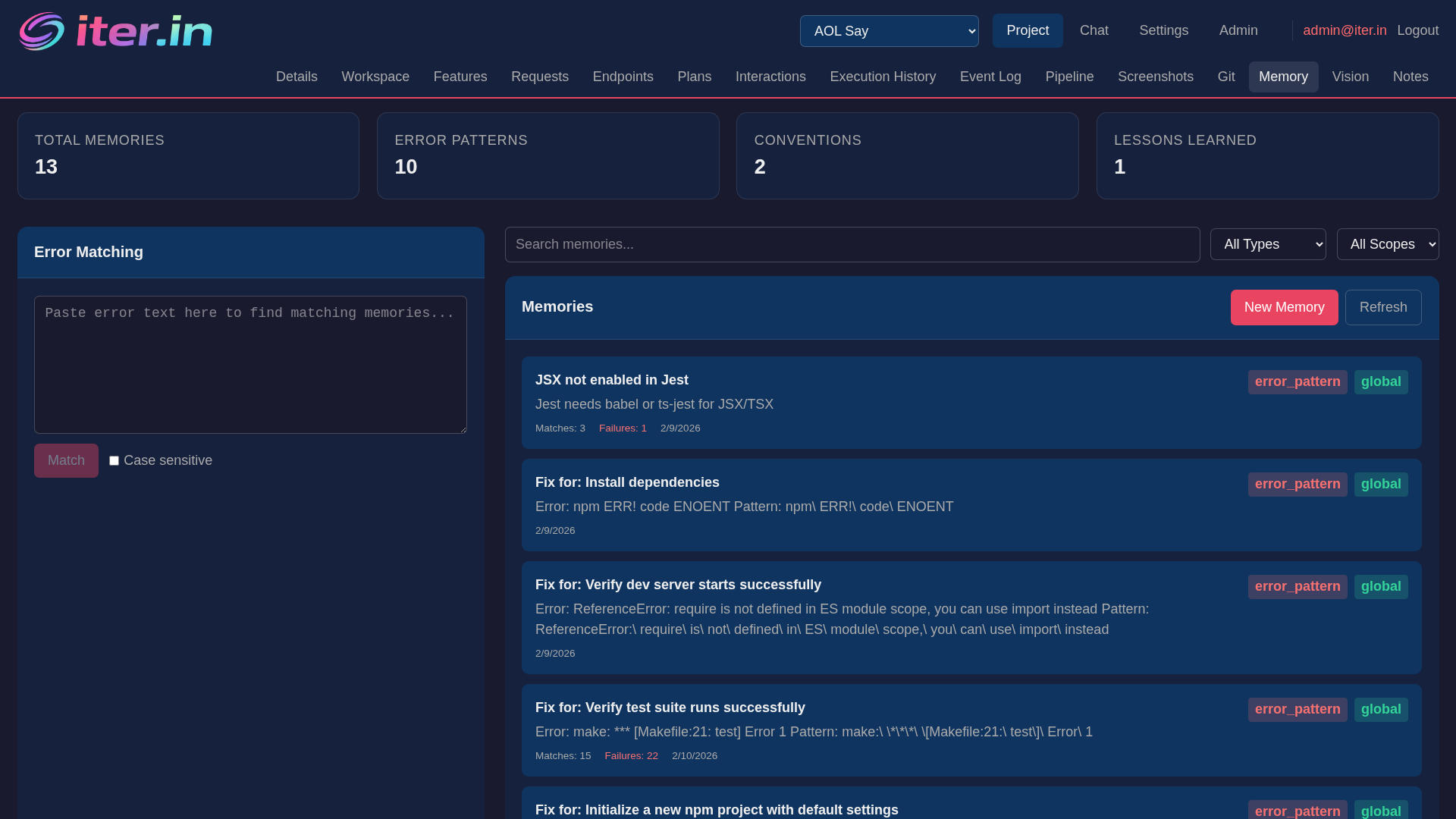

Memory & Error Recovery

Iter remembers errors it has seen before. When a step fails with a known pattern, it automatically recalls the fix, combining regex matching with semantic vector search for high-precision error recovery.

- Regex + vector hybrid matching for error patterns

- Automatic fix recall from previous successful resolutions

- Project-scoped memory with cross-project search
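Hybrid matching can be sketched as a two-pass lookup: a precise regex pass first, then a fuzzy embedding pass with a similarity threshold. The `ErrorMemory` record, the `recall_fix` function, and the 0.85 threshold are illustrative assumptions, not Iter's actual values.

```python
import math
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class ErrorMemory:
    pattern: str             # regex identifying this error family
    embedding: list[float]   # embedding of a previously seen error message
    fix: str                 # the fix that resolved it last time


def _cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recall_fix(error_text: str, error_vec: list[float],
               memories: list[ErrorMemory], threshold: float = 0.85) -> Optional[str]:
    """Hybrid lookup: regex match first, then the nearest embedding above a threshold."""
    # 1. Precise pass: a regex hit is trusted outright.
    for memory in memories:
        if re.search(memory.pattern, error_text):
            return memory.fix
    # 2. Fuzzy pass: fall back to the most similar past error if it is close enough.
    best = max(memories, key=lambda m: _cosine(error_vec, m.embedding), default=None)
    if best is not None and _cosine(error_vec, best.embedding) >= threshold:
        return best.fix
    return None
```

The regex pass avoids false positives on well-known errors. The vector pass catches reworded variants that no pattern anticipated, and the threshold keeps it from recalling an unrelated fix.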

Feature & Request Tracking

Manage features, endpoints, and requests in a structured hierarchy. Each feature tracks its affected files, acceptance criteria, and linked endpoints, giving the AI precise context for implementation.

- Feature hierarchy with parent-child relationships and status tracking

- Endpoint registry with method, path, and feature linking

- Request queue with priority, acceptance criteria, and affected files

Mobile App

A React Native app that connects to your Iter workspace over your local network. Chat with your AI models, use voice input, and browse available hosts and models, all from your phone.

- Streaming chat with token-by-token SSE responses

- Voice input with Whisper STT and text-to-speech responses

- Smart context awareness for creating requests on the go
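Token-by-token streaming over Server-Sent Events comes down to parsing `data:` lines. The app itself is React Native. For consistency with the other examples, the sketch is in Python and follows the SSE framing rules: data lines are accumulated, a blank line dispatches the event, and an incomplete trailing event is discarded. The `{"token": ...}` payload shape and the `[DONE]` sentinel are assumptions about Iter's stream, not documented behaviour.

```python
import json
from typing import Iterable, Iterator


def sse_events(lines: Iterable[str]) -> Iterator[str]:
    """Yield the data payload of each complete Server-Sent Event."""
    data: list[str] = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            if data:                      # a blank line dispatches the pending event
                yield "\n".join(data)
                data = []
        elif line.startswith("data:"):
            value = line[5:]
            data.append(value[1:] if value.startswith(" ") else value)
        # other fields (event:, id:, retry:) and ':' comments are ignored in this sketch
    # an event without a terminating blank line is incomplete and is dropped


def stream_tokens(lines: Iterable[str]) -> Iterator[str]:
    """Assumed payload: one {"token": "..."} object per event, then "[DONE]"."""
    for payload in sse_events(lines):
        if payload == "[DONE]":
            return
        yield json.loads(payload)["token"]
```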

Dashboard, CLI & MCP Tools

A React dashboard for visual orchestration, a terminal-based agentic CLI with reasoning and file operations, and MCP tools for editor integration.

```console
# Create a project and add a feature request
$ iter project create --name "my-api"
✓ Project created: my-api
$ iter request add --description "Add user authentication with JWT"
✓ Request added: req_01

# Orchestration runs the full pipeline automatically
$ iter orchestrate --request req_01
▶ Phase 1: Building prompt with project context...
▶ Phase 2: Streaming from qwen3-coder on judy...
▶ Phase 3: Pre-execution safety review... PASS
▶ Phase 4: Executing 12 steps...
▶ Phase 5: Post-execution review... PASS
✓ Request completed: 8 files created, 4 modified
```

How Iter compares

What sets Iter apart from project managers, LLM chat UIs, and AI coding agents.

vs Project Managers (Jira, Asana, monday)

- AI code generation with multi-phase pipeline

- Pre + post execution safety reviews

- 100% self-hosted, free forever

- Built-in auth, teams, orgs, and RBAC

vs LLM Interfaces (Open WebUI, LM Studio)

- Full code execution, not just chat

- Capability-based multi-model routing

- Automatic git branches + safe merge

- Native mobile app (iOS + Android)

vs AI Coding Agents (Cursor, Cline, Aider, OpenHands)

- Structured multi-phase pipeline with DAG execution

- 8-point safety review before execution

- Auto-fix with step tree injection + re-verify

- 100% local, no cloud API keys needed

Only in Iter

- RAG with ChromaDB semantic search + vector file ranking

- Vision (OCR + VLM), voice (STT + TTS + NLP)

- Agentic CLI with reasoning, sessions, file ops

- Evidence ledgers, portfolios, auth + RBAC, mobile app

Microservice architecture

10 Docker containers on a private bridge network. Each service has one job.

Agent Server

API gateway, project state management, prompt generation, and review logic.

Operator

Runs the multi-phase pipeline, manages orchestration lifecycle, connects directly to LLMs.

Executor

Stateless sandbox for shell commands and file operations. Process isolation for safety.

Self-Hosted

Full control. Run on your own infrastructure, manage your own models, keep everything on your network. Free and open-source forever.

Get started →

Hosted (Coming Soon)

Zero setup. Managed agents, shared memory, team sync, and auto-updates. Same platform, none of the ops overhead.

Join the waitlist →

Ready to own your development workflow?

Get started with Iter in minutes. Self-hosted, open-source, and designed for developers who want full control.